Bounding the Heavy-tailed Pseudorange Error by Leveraging Membership Weights Analysis of Gaussian Mixture Model

Published in Pacific PNT 2024, 2024

1) What problem is this paper solving?

Context: Developing error bounds for heavy-tailed DGNSS error distributions.

Core contribution: A partitioning strategy based on the convexity of the tail component's membership weight in a bimodal Gaussian mixture model (BGMM).

Achieved goal: A theoretically sound, unimodal, and symmetric overbound.

2) Why is this paper important?

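What changed: Integrity monitoring requires a rigorous proof that range-domain bounds still hold after convolution, that is, after the individual errors are combined into a position-domain error.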

Problem created: Arbitrary overbounds may lose their bounding property when combined.

Why current solutions fail: They often lack the structural properties (unimodality, symmetry) needed for convolution.
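The requirement can be written down in generic overbounding notation. The block below is a sketch in symbols chosen here for illustration ($F_a$, $F_o$, $\varepsilon_i$, $s_i$ are assumptions, not the paper's notation). It states the usual CDF overbound condition and the classical convolution-preservation result that motivates the structural properties above.

```latex
% CDF overbound: F_o bounds the actual error CDF F_a in both tails
F_o(x) \ge F_a(x) \quad \forall x \le 0,
\qquad
F_o(x) \le F_a(x) \quad \forall x > 0.

% Preservation through convolution (holds when every F_{a,i} and F_{o,i}
% is zero-mean, symmetric, and unimodal): for a linear combination
e = \sum_i s_i \,\varepsilon_i, \qquad \varepsilon_i \sim F_{a,i},
% the distribution of \sum_i s_i \,\tilde{\varepsilon}_i with
% \tilde{\varepsilon}_i \sim F_{o,i} is again a CDF overbound of the
% distribution of e.
```

Without symmetry and unimodality this guarantee is not available in general, which is why a bound that merely dominates each tail in the range domain may not be enough.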

3) How does this paper solve it?

Contribution 1: Analyzes the membership weight of the tail component in a Bimodal GMM.

Contribution 2: Proves the convexity of this membership weight and uses it to partition the distribution into a core region and a tail region.
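To make the membership weight concrete, here is a minimal sketch that evaluates the tail component's membership weight (its posterior responsibility) for a zero-mean two-component Gaussian mixture. The function name and the parameter values (`P_CORE`, `SIGMA_CORE`, `SIGMA_TAIL`) are hypothetical and chosen only for illustration. The paper's actual partition rule is not reproduced here.

```python
import math


def tail_membership_weight(x, p_core, sigma_core, sigma_tail):
    """Posterior probability that x was drawn from the tail component
    of a zero-mean two-component Gaussian mixture."""
    # Log-density of each weighted component (the shared 1/sqrt(2*pi) cancels).
    log_core = math.log(p_core) - math.log(sigma_core) - 0.5 * (x / sigma_core) ** 2
    log_tail = math.log(1.0 - p_core) - math.log(sigma_tail) - 0.5 * (x / sigma_tail) ** 2
    log_odds = log_tail - log_core
    # Numerically stable logistic function of the log-odds.
    if log_odds >= 0.0:
        return 1.0 / (1.0 + math.exp(-log_odds))
    e = math.exp(log_odds)
    return e / (1.0 + e)


# Hypothetical mixture parameters (assumptions, not values from the paper).
P_CORE, SIGMA_CORE, SIGMA_TAIL = 0.9, 1.0, 4.0

for x in (0.0, 1.0, 2.0, 4.0, 8.0):
    w = tail_membership_weight(x, P_CORE, SIGMA_CORE, SIGMA_TAIL)
    print(f"x = {x:4.1f}  tail weight = {w:.4f}")
```

With these assumed parameters the weight is symmetric in `x`, smallest at the origin, and approaches 1 for large `|x|`, which is the behavior that makes a core/tail split natural.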

Key result: Constructs an overbound whose bounding property is preserved through convolution, which facilitates integrity monitoring.
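The meaning of "preserved through convolution" can be checked numerically on a toy case. The sketch below does not implement the paper's Principal Gaussian overbound. As a stand-in, it uses a single zero-mean Gaussian whose sigma equals the tail sigma of an assumed two-component mixture, which is a loose but symmetric and unimodal overbound. All names and parameter values are hypothetical.

```python
import math


def normal_cdf(x, sigma):
    """CDF of a zero-mean Gaussian; erfc keeps precision in the far left tail."""
    return 0.5 * math.erfc(-x / (sigma * math.sqrt(2.0)))


def gmm_cdf(x, weights, sigmas):
    """CDF of a zero-mean Gaussian mixture."""
    return sum(w * normal_cdf(x, s) for w, s in zip(weights, sigmas))


def convolve_zero_mean_gmms(weights_a, sigmas_a, weights_b, sigmas_b):
    """Distribution of the sum of two independent zero-mean mixtures:
    weights multiply and variances add for every pair of components."""
    weights = [wa * wb for wa in weights_a for wb in weights_b]
    sigmas = [math.hypot(sa, sb) for sa in sigmas_a for sb in sigmas_b]
    return weights, sigmas


def left_tail_overbounds(bound_cdf, actual_cdf, grid):
    """Check bound_cdf(x) >= actual_cdf(x) on a grid of x <= 0.
    Both distributions are symmetric, so the right tail follows by symmetry."""
    return all(bound_cdf(x) >= actual_cdf(x) * (1.0 - 1e-9) for x in grid)


# Hypothetical error model and stand-in overbound (assumptions).
WEIGHTS, SIGMAS = [0.9, 0.1], [1.0, 4.0]
SIGMA_BOUND = 4.0
grid = [-0.1 * k for k in range(401)]  # 0 down to -40

# Range domain: the single error versus its overbound.
single_ok = left_tail_overbounds(
    lambda x: normal_cdf(x, SIGMA_BOUND),
    lambda x: gmm_cdf(x, WEIGHTS, SIGMAS),
    grid,
)

# After convolution: sum of two independent errors versus convolved overbounds.
sum_weights, sum_sigmas = convolve_zero_mean_gmms(WEIGHTS, SIGMAS, WEIGHTS, SIGMAS)
sum_sigma_bound = math.hypot(SIGMA_BOUND, SIGMA_BOUND)
sum_ok = left_tail_overbounds(
    lambda x: normal_cdf(x, sum_sigma_bound),
    lambda x: gmm_cdf(x, sum_weights, sum_sigmas),
    grid,
)

print("overbound holds before convolution:", single_ok)
print("overbound holds after convolution: ", sum_ok)
```

A grid check like this is only a sanity test on one example, not a proof. The paper's contribution is a bound for which the property is established theoretically.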

🎯 Takeaway: Understanding the geometry of GMM weights allows for tighter, safer error bounds.

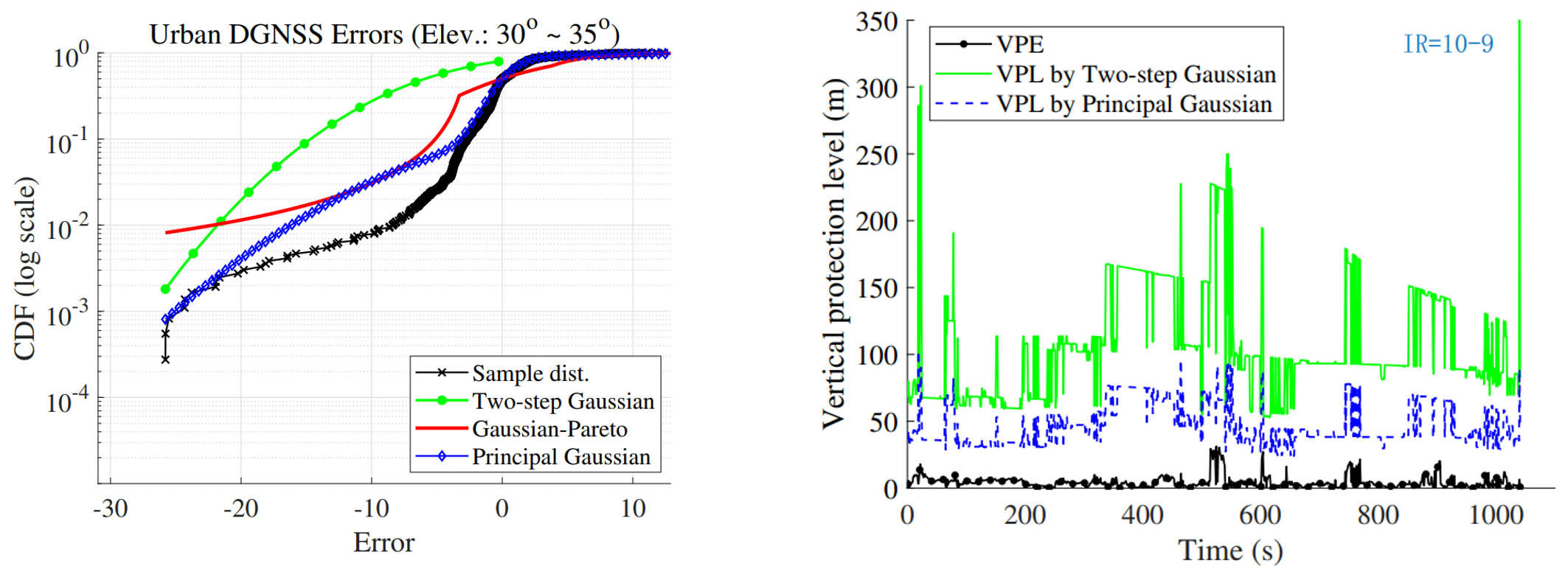

(a) CDFs (on a logarithmic scale) of the proposed method (Principal Gaussian overbound), the two-step Gaussian overbound, and the Gaussian-Pareto overbound for urban DGNSS errors (a heavy-tailed distribution); (b) protection levels of the least-squares (LS) solution based on the proposed method (Principal Gaussian overbound) and the two-step Gaussian overbound, with the integrity risk set to 10^-9.

DOI: https://doi.org/10.33012/2024.19604

Recommended citation:

Yan, P., Zhong, Y., & Hsu, L. T. (2024, April). "Bounding the Heavy-Tailed Pseudorange Error by Leveraging Membership Weights Analysis of Gaussian Mixture Model". In Proceedings of the ION 2024 Pacific PNT Meeting (pp. 541-555).

BibTeX

@inproceedings{yan2024pnt,

author = {Yan, Penggao and Zhong, Yang and Hsu, Li-Ta},

title = {Bounding the Heavy-tailed Pseudorange Error by Leveraging Membership Weights Analysis of Gaussian Mixture Model},

booktitle = {Proceedings of the ION 2024 Pacific PNT Meeting},

year = {2024},

pages = {541--555}

}